The Twitter Whistleblower Story Is Worse Than You Think

When Twitter’s former head of security, Peter “Mudge” Zatko, blew the whistle on his ex-employers, it sparked a journalistic feeding frenzy. And, as tends to happen, those journalists tried to distill a complex story into its simplest and most compelling parts.

To begin, the 84 pages of Zatko’s redacted complaint were quickly divided into three general categories:

- Shoddy Security: Twitter suffered from “extreme, egregious” security shortcomings, which it failed to address even in response to an FTC settlement and numerous high-profile incidents.

- Spam and Bots: Twitter failed to provide its employees with meaningful resources to combat non-human accounts.

- Lack of Leadership: When Twitter’s leadership was confronted with the above problems, they were apathetic, ineffectual, or dishonest.

From there, the story got even narrower as coverage began to focus on issues two and three. That’s unsurprising, since Twitter’s bot issue–and the company’s lack of candor about it–is the basis of Elon Musk’s claim that he should be allowed to walk away from his acquisition deal. And a standoff between the notoriously polarizing World’s Richest Man and a scandal-plagued social media company is certainly newsworthy.

Still, it’s worth asking why the economic story is overshadowing the security one. Given the amount of sensitive data the site has on its users–including and especially journalists–and the fact that its security lapses have already caused global chaos, why aren’t we all more alarmed?

Why It’s Hard To Talk About the Twitter Security Story

The most obvious answer is that it’s tough for journalists to write about cybersecurity in ways that resonate with non-technical readers. Mudge’s allegation that over 30% of Twitter’s employee devices had automatic software and security updates disabled is (or should be) horrifying to cybersecurity professionals. But that fact won’t hit nearly as hard if you’re a user who’s accustomed to clicking “remind me later” every time there’s an OS update. (And that “yawn” reaction is, of course, exactly why automatic updates are so important.)

But there’s a deeper reason why no one is treating Twitter’s security failures like a five-alarm fire: it’s because we don’t expect anything better. The general public has weathered years of stories about their personal data being mishandled by tech companies, and while it might be infuriating, there’s nothing they can do about it. (Technically, they could leave Twitter, but the platform has no meaningful competitors, and jumping ship means losing all the followers they spent years cultivating.)

Meanwhile, people familiar with enterprise cybersecurity know that while Twitter’s security issues might be particularly dramatic and dangerous, they’re also frighteningly commonplace. Twitter’s struggles to enforce its own security policies are playing out in companies every day, especially when guarding against insider threats.

So while this news might be horrifying, it is not shocking. With that in mind, let’s take a look at some of Mudge’s accusations and ask ourselves how common they are, and how we can start being more honest about how we manage these risks.

Mudge’s Claims About Twitter Security

What’s remarkable about Mudge’s accusations is that Twitter wasn’t just failing to guard against hypothetical scenarios; they were failing to patch holes that had already led to breaches.

For context, Twitter has a long history of data breaches, and the common thread in the majority of them is Twitter employees. In some cases, hackers guessed or spearphished employee login credentials and used the access to steal data or take control of accounts. In other cases, employees were able to inappropriately access private information, including the employee found guilty of spying on behalf of the Saudi Arabian government.

In 2011, Twitter agreed to a settlement with the FTC in which they promised to beef up security. Yet controlling employee access proved to be more challenging than they anticipated or disclosed.

Endpoint Security

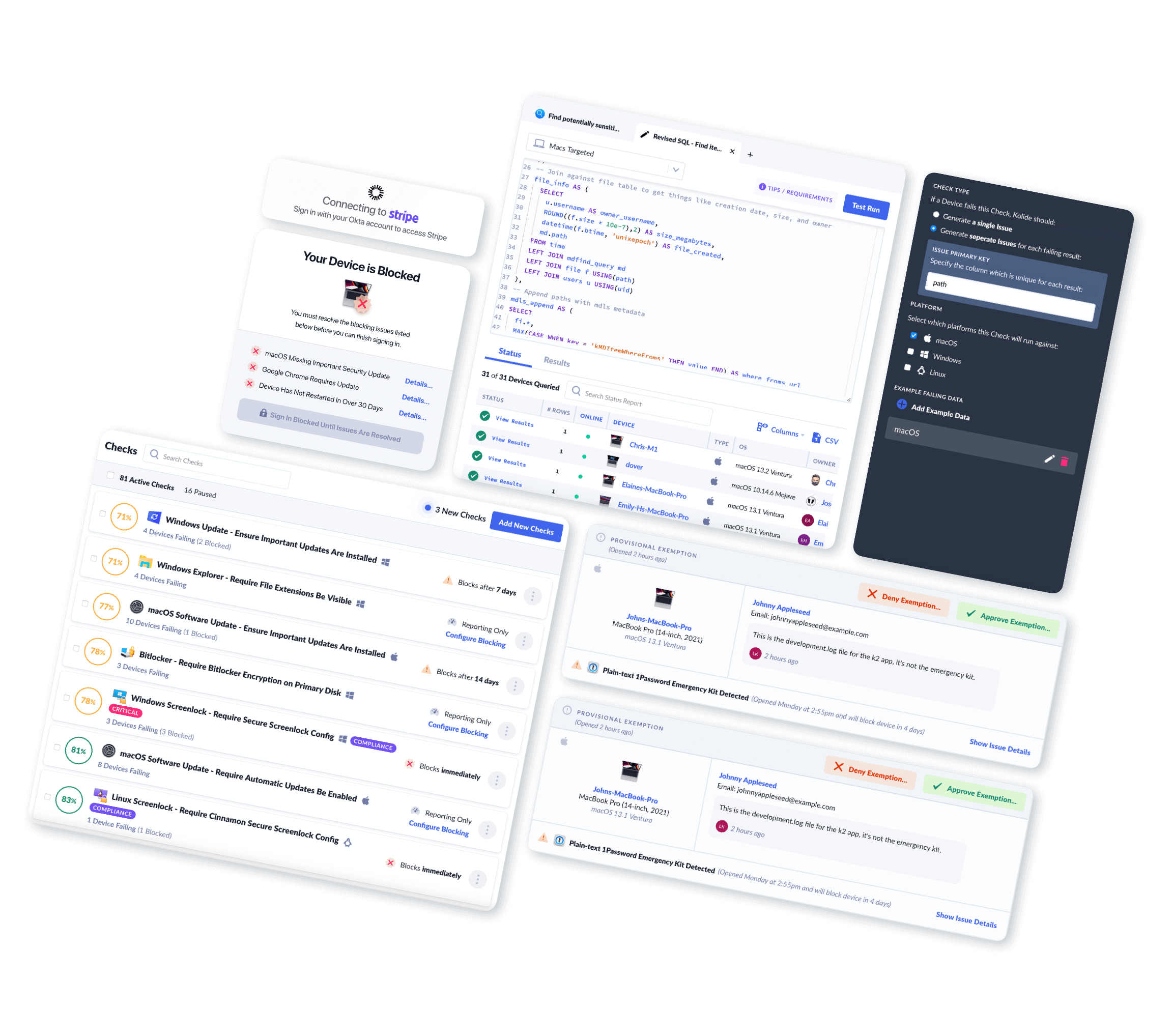

The claim: The whistleblower complaint alleges that Twitter failed to ensure employee devices were in compliance with security policies. They installed endpoint security software across their fleet of laptops, but that software either could not or did not enforce those policies.

“[Redacted] stated that 92% of Twitter employee computers had this security software installed, implying a high level of security. But in truth, the endpoint software did not actually provide or constitute security on its own. Rather, the endpoint software’s primary function was to evaluate whether the employee computer had basic security configurations enabled. And most importantly, the software on these endpoint computers was reporting dire problems. Over 30% of the more than 10,000 employee computers were lacking the most basic security settings, such as enabling software updates.”

-From the whistleblower complaint (emphasis ours)

What it means: We don’t know what specific endpoint security software Mudge is referring to, but it’s reasonable to assume the company had some form of MDM for employee laptops. (We can infer this, in part, because the complaint specifically calls out the absence of MDM on mobile devices.) In theory, this should have enabled the security team to enforce updates remotely, but clearly, this software had either been disabled or simply wasn’t running properly on a large percentage of devices.
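The gap between "the agent is installed" and "the device is actually secure" is easy to see with a little arithmetic. Here's a minimal sketch, assuming a hypothetical inventory export; the `DeviceReport` fields and the sample fleet are our own illustration, not anything described in the complaint.

```python
from dataclasses import dataclass


@dataclass
class DeviceReport:
    # Hypothetical fields; a real endpoint agent reports far more than this.
    hostname: str
    agent_installed: bool
    auto_updates_enabled: bool


def coverage_and_compliance(reports):
    """Return (share of devices with the agent, share that are actually compliant)."""
    total = len(reports)
    if total == 0:
        return 0.0, 0.0
    installed = sum(r.agent_installed for r in reports)
    # A device only counts as compliant if the agent is present AND
    # the setting it checks (here, automatic updates) is switched on.
    compliant = sum(r.agent_installed and r.auto_updates_enabled for r in reports)
    return installed / total, compliant / total


# Made-up sample fleet for illustration only.
fleet = [
    DeviceReport("laptop-01", True, True),
    DeviceReport("laptop-02", True, False),
    DeviceReport("laptop-03", True, True),
    DeviceReport("laptop-04", False, False),
]

coverage, compliance = coverage_and_compliance(fleet)
print(f"agent coverage: {coverage:.0%}, compliant: {compliance:.0%}")
```

Reporting only the first number is how a fleet can look well protected on a slide while a large share of its devices are missing basic settings.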

How common is this problem? In a word: very. In a 2022 survey of IT and security professionals, the majority of respondents agreed that their single biggest problem is keeping software current. On top of that, “Nearly two-thirds of respondents said lack of visibility into endpoints is their organization’s most significant cybersecurity vulnerability.”

Keeping employee devices up-to-date is one of the most important responsibilities of any security team, but the rise of remote work, BYOD policies, and the sheer number of competing endpoint agents on each device complicates visibility and accountability.

Access Control

The claim: Mudge alleges that Twitter gave far too many employees the ability to access sensitive data and make changes to the platform itself.

“…according to expert quantification and analysis in January 2022, over half of Twitter’s 8,000-person staff was authorized to access the live production environment and sensitive user data. Twitter lacked the ability to know who accessed systems or data or what they did with it in much of their environment.”

What it means: This situation had already led to a major security breach in 2020, when a teenager who spearphished employee credentials was able to achieve “God mode” and tweet from accounts including Barack Obama, Bill Gates, and Kanye West. Elsewhere in the complaint, Mudge sketches out a much more frightening scenario.

On January 6, 2021, in the midst of the Capitol riot, he claims he tried to seal off Twitter’s production environment, to prevent any internal bad actors from adding to the chaos. He was informed that this was impossible since “There were no logs, nobody knew where data lived or whether it was critical, and all engineers had some form of critical access to the production environment.”

How common is it? Security in general is moving toward a “zero trust” paradigm, in which access to sensitive assets is rigorously controlled and monitored, and even employees with the most privileges must continually authenticate. But this is a slow transition, and it’s intimately related to the endpoint issue we discussed above. In other words, if you have no way of knowing whether employees are storing sensitive information on their devices (whether that’s customer data or their own access keys), you have no way to control access to it.
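A first step toward tighter access control is simply knowing who holds production access and whether their role calls for it. The sketch below is one way to frame that audit, assuming a hypothetical employee export; the role names, the `"production"` permission label, and the sample data are all invented for illustration.

```python
# Hypothetical set of roles that genuinely need production access.
ROLES_NEEDING_PRODUCTION = {"sre", "oncall-engineer"}


def production_access_share(employees):
    """Share of staff whose permissions include production access."""
    if not employees:
        return 0.0
    with_prod = [e for e in employees if "production" in e["permissions"]]
    return len(with_prod) / len(employees)


def excess_production_access(employees):
    """Names of people who hold production access their role does not require."""
    return [
        e["name"]
        for e in employees
        if "production" in e["permissions"]
        and e["role"] not in ROLES_NEEDING_PRODUCTION
    ]


# Made-up sample staff list for illustration only.
staff = [
    {"name": "ana", "role": "sre", "permissions": {"production"}},
    {"name": "bo", "role": "designer", "permissions": {"production", "staging"}},
    {"name": "cy", "role": "marketing", "permissions": {"staging"}},
    {"name": "di", "role": "data-analyst", "permissions": {"production"}},
]

print(f"production access: {production_access_share(staff):.0%}")
print("review these grants:", excess_production_access(staff))
```

A list like this doesn't enforce anything on its own, but it turns "too many people have access" into a concrete set of grants to review.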

Shadow IT

The claim: Some of the murkiest allegations in the complaint state that Twitter employees routinely installed surveillance software at the behest of third parties.

“Although against policy, it was commonplace for people to install whatever software they wanted on their work systems. Twitter employees were repeatedly found to be intentionally installing spyware on their work computers at the request of external organizations.”

What it means: We’re not going to speculate on what Mudge means by “external organizations,” although its potential implications are obviously troubling. But putting that aside, employees installing “whatever software they want” is already a significant eyebrow-raiser.

How common is this problem? “Shadow IT”–employees secretly working on unapproved software or devices–is a widespread problem. But, as we’ve written before, the vast majority of workers who use Shadow IT don’t have any nefarious intentions; they’re just trying to do their jobs.

Nevertheless, the presence of Shadow IT in an organization speaks to some larger problems:

- Lack of effective communication from security and IT teams about security risks

- Employees who feel they aren’t given the tools they need and with no clear way to ask for them

- No visibility into endpoints to reveal the presence of unapproved tools
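That last point, visibility, is the most mechanical of the three. As a rough sketch, assuming you already have a per-device software inventory from some endpoint tool, surfacing unapproved apps is a simple set comparison. The allowlist, hostnames, and app names below are hypothetical.

```python
# Hypothetical allowlist of approved applications (lowercase names).
APPROVED = {"slack", "zoom", "vscode"}


def unapproved_software(inventory):
    """Map each hostname to a sorted list of installed apps not on the allowlist.

    `inventory` maps hostname -> iterable of installed app names.
    Devices with nothing unapproved are left out of the result.
    """
    findings = {}
    for host, apps in inventory.items():
        extra = sorted({app.lower() for app in apps} - APPROVED)
        if extra:
            findings[host] = extra
    return findings


# Made-up inventory for illustration only.
inventory = {
    "laptop-01": ["Slack", "Zoom"],
    "laptop-02": ["Slack", "Notion", "screen-recorder"],
}

print(unapproved_software(inventory))
```

Since most Shadow IT comes from people trying to do their jobs, a report like this works best as a prompt to ask what tools employees are missing, rather than as a list of offenders.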

The Twitter Whistleblower Should Sound Alarms for Everyone

We’re not bringing up the pervasiveness of these security issues in an attempt to shame or scold anyone. (Not even Twitter.) Rather, this is an opportunity to acknowledge that addressing these problems is incredibly challenging, even for companies with good intentions and vast resources.

We run into trouble when we pretend–to auditors, regulators, customers, and ourselves–that we’ve got security all figured out, and sweep any evidence to the contrary under the rug.

At the end of the day, Mudge’s most damning indictment of Twitter isn’t the security challenges they faced but their unwillingness to look those challenges in the face. That’s a tough thing to do, but it becomes much easier once you accept that the end goal of security is not a world without risk; it’s a world in which everyone understands risk well enough to make informed decisions. That’s just honest.