2023's Least and Most Secure Authentication Methods

Any security professional will tell you there’s a simple way to keep data secure: encase it in concrete and toss it in the ocean. Unfortunately, while that approach will keep hackers out, it’ll also lock out legitimate users. The next best thing is to set up authentication protocols that don’t make access too easy for hackers or too tough for end users.

Broadly, there are three best practices that play into that decision. You need to:

Reflect current opportunities and threats. Companies have to choose authentication methods that balance (sometimes competing) needs for security and usability, which is challenging because the right choice in 2023 might differ from the right choice a year ago. The state of the art constantly shifts in response to breakthroughs by both vendors and hackers, like the person who beat a bank’s “secure” voice recognition software with a free AI tool.

Choose the appropriate level of security for the user and resource. The “right” approach to authentication has to be tailored to the resources it’s designed to protect and the users trying to access them. The same company might use different forms of authentication for its customers, workers, and contractors. And even within the category of workers (which we’ll be primarily focusing on in this blog), you might use tougher authentication for senior engineers who access your source code than for, say, a marketer who just writes about it.

Don’t rely on a single form of authentication. None of the authentication methods we’re about to go over should be considered in isolation but as part of a holistic approach to verifying user and device identity and security.

Tl;dr: no matter your mix of users and resources, choosing authentication methods isn’t about picking the single, infallible option. It’s about building a multi-layered approach that makes hacking more trouble than it’s worth and gives access to the right people at the right time.

The Three Types of Authentication Factors

Most security practitioners sort authentication methods into three categories, called factors. (As we’ll see, they don’t all fit neatly into a single bucket, nor does the number of factors have to be capped at three, but it’s still a good starting place.)

A knowledge factor is something you know. Passwords, PINs, and security questions are all knowledge factors.

A possession factor is something you have. Security cards, external hardware dongles, and even devices themselves fall into the possession factor bucket.

An inherence factor is something you are. These are biometrics, like fingerprint readers, facial scanners, etc.

A security best practice is to combine multiple forms of user authentication into a multifactor authentication (MFA) protocol. And there’s a reason it’s not called multi-method authentication.

The goal of MFA is to pull from two or more factors so a threat actor can’t gain access using a single attack vector. For example, a hacker can swipe your password and security question answers (knowledge) in a single spear phishing attack. With phishing-resistant MFA, the thief would also need your fingerprint (inherence) or hardware fob (possession) to breach your system.
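To make the factor-versus-method distinction concrete, here’s a minimal sketch in Python. The method names and the `METHOD_FACTORS` mapping are illustrative assumptions, not a standard taxonomy; the only point is that a login counts as multifactor when its methods span at least two different factors.

```python
from enum import Enum


class Factor(Enum):
    KNOWLEDGE = "something you know"
    POSSESSION = "something you have"
    INHERENCE = "something you are"


# Hypothetical mapping of method names to factors, following the
# three categories described above.
METHOD_FACTORS = {
    "password": Factor.KNOWLEDGE,
    "pin": Factor.KNOWLEDGE,
    "security_question": Factor.KNOWLEDGE,
    "security_card": Factor.POSSESSION,
    "hardware_fob": Factor.POSSESSION,
    "fingerprint": Factor.INHERENCE,
    "face_scan": Factor.INHERENCE,
}


def distinct_factors(methods):
    """Return the set of factors covered by the given method names."""
    return {METHOD_FACTORS[m] for m in methods}


def is_multifactor(methods):
    """True only if the methods draw on two or more different factors."""
    return len(distinct_factors(methods)) >= 2
```

Under these assumptions, `is_multifactor(["password", "security_question"])` is false because both methods are knowledge factors, while `is_multifactor(["password", "fingerprint"])` is true.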

Lastly, not all methods within a factor are equally secure. For instance, a one-time code from an authentication app is considered safer than an easily stealable SMS-delivered password. That’s what we’ll break down next.

Least Secure: Passwords

Pros: Familiar to users; simple UX; easy to deploy

Cons: Vulnerable to many types of attacks; attractive to threat actors

Best suited for: Primary authentication for non-sensitive assets; securing internal docs protected externally by other methods; customer accounts with strong secondary authentication factors

In 1961, the first computer passwords protected private files and logged user time on MIT’s Compatible Time-Sharing System (CTSS). Late one Friday night in 1962, MIT researcher Allan Scherr entered a punch card into CTSS, asking the machine to print all the passwords. The system complied, and the first password theft was a success.

Scherr may have been the first to break into a computer via stolen passwords, but he’s certainly not the last. Compromised credentials consistently rank as the most common way hackers breach organizations.

Despite their inherent vulnerabilities, passwords are the most popular authentication factor. That’s mostly down to their simple deployment (no hardware needed) and lack of a learning curve for users. But tech giants, authentication providers, and government agencies are creating a path to a passwordless future.

The vulnerabilities of passwords

In fairness to passwords, they really aren’t the problem here–we are. Users fall for phishing attacks and practice poor password hygiene, while companies often fail to protect their databases of passwords or block credential-based attacks. And hackers are only too happy to exploit these human failures.

Here are a few examples:

Social engineering attacks: This is really a weakness of all knowledge factors: if something can be known, it can be phished. Bad actors use phishing emails, create fake websites, and pretend to be tech support to trick users into exposing their credentials. Even though users get regular reminders to guard against these attacks, we still fall for them. In 2021, 86% of organizations knew at least one person on their team had clicked a phishing email.

Brute force credential-based attacks: Thieves use a variety of methods to either guess user credentials (password spraying) or apply known credentials to multiple websites (credential stuffing). Brute force attacks, made possible by weak passwords, are the number one threat to remote access protocols like Microsoft’s RDP.

Password storage breaches: Like Allan Scherr’s credential caper in the 1960s, threat actors continue to swipe vast numbers of credentials (usually to sell on the dark web). This wouldn’t be an issue if organizations who maintain passwords properly hashed and salted them, and yet here we are.

Man-in-the-middle attacks: Hackers sometimes steal passwords by hijacking communication channels using DNS spoofing or WiFi eavesdropping. While not as common as they once were thanks to the advent of stronger cryptography, MITM attacks are evolving with new technology, like drones equipped with proximity penetration kits. (But hey, at least the hackers have to work harder now.)
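On the storage point above, here’s a rough sketch of what “hashed and salted” can look like using only Python’s standard library. The function names, the choice of PBKDF2-HMAC-SHA256, and the iteration count are our assumptions for illustration; a production system would more likely lean on a vetted password-hashing library and tune the parameters for its own hardware.

```python
import hashlib
import hmac
import os
from typing import Optional, Tuple

# Assumed work factor for this sketch; tune it for your own hardware.
ITERATIONS = 600_000


def hash_password(password: str, salt: Optional[bytes] = None) -> Tuple[bytes, bytes]:
    """Return (salt, digest). A fresh random salt is generated if none is given."""
    if salt is None:
        salt = os.urandom(16)  # unique per password, stored next to the digest
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Re-derive the digest with the stored salt and compare in constant time."""
    _, candidate = hash_password(password, salt)
    return hmac.compare_digest(candidate, expected)
```

Because each password gets its own random salt, two users with the same password end up with different stored digests, which blunts precomputed lookup tables if the database leaks.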

Passwords as part of MFA

While it’s not feasible for every company to give up passwords cold turkey, you should at least avoid pairing them with another knowledge factor. For example, companies sometimes use security questions as a password recovery method, but these are even less secure than passwords. Not only are they vulnerable to the attacks listed above, they’re based on information—like your favorite pet’s name—that hackers can find after 10 minutes of social media sleuthing.

Single Sign-On and password managers aren’t a complete fix

At this point, it’s widely accepted that passwords are inherently insecure and should be phased out. Even Apple, Google, and Microsoft can agree on that, and they’re helping usher in a passwordless future with the introduction of passkeys. Still, it will take years before we rid ourselves of passwords, and in the meantime, password managers and Single Sign-On (SSO) can help mitigate some of their risks.

Password managers like 1Password and LastPass give users strong passwords and a safe place to store them. SSO tools reduce password fatigue by allowing users to enter one set of credentials to access multiple resources.

But here’s the rub: the underlying vulnerability remains if you’re logging into a password manager or SSO app with a password. So these tools are currently[1] exposed to all the same phishing and MITM attacks as the resources they protect. And of course, outsourcing risk to any vendor creates its own risks. Hackers can break into password management software, as evidenced by a pair of breaches at LastPass.

More Secure: One-time Passwords

Pros: Some versions are secure secondary authentication factors; they’re inexpensive to deploy

Cons: SMS OTPs are vulnerable to attack; users need to keep up with an extra device

Best suited for: Simple secondary authentication; customer users (SMS OTPs), or remote professional users (authenticator apps and security fobs)

One-time passwords (also called one-time codes or dynamic passwords) are unique, algorithmically generated codes. They’re usually used as a step-up authentication method if a user takes a certain action (like initiating a transaction) or if there’s something fishy about a login attempt (like if it’s from an unrecognized device).

OTPs can be delivered in a variety of ways, some of which require a secondary device and are more like possession factors than knowledge factors:
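The step-up logic described above can be sketched in a few lines. This is only an illustration: the `LoginContext` fields and the `SENSITIVE_ACTIONS` set are hypothetical names we made up, and a real policy engine would weigh many more signals.

```python
from dataclasses import dataclass

# Hypothetical list of actions that should trigger an extra check.
SENSITIVE_ACTIONS = {"initiate_transaction"}


@dataclass
class LoginContext:
    device_recognized: bool  # has this device been seen for this user before?
    action: str              # what the user is trying to do


def needs_step_up(ctx: LoginContext) -> bool:
    """Ask for an OTP when the device is unfamiliar or the action is sensitive."""
    return (not ctx.device_recognized) or ctx.action in SENSITIVE_ACTIONS
```

A routine action from a known device passes without friction, while the same action from an unrecognized device, or a transaction from any device, prompts for the one-time code.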

- SMS

- Authenticator apps

- Hardware security tokens (smart cards and fobs)

More secure OTPs require a second device or piece of hardware, which is less vulnerable to interception. But once a user has the code, it becomes a knowledge factor that can be phished, just like a password. Ideally, they should be paired with a biometric factor for true MFA.

SMS and email OTPs are weaker

It’s understandable why OTPs delivered via SMS or email are popular. Anyone with an email account or a cell phone can use them without downloading yet another app.

On-demand OTPs are also popular with threat actors. Hackers can intercept OTPs through weaknesses in SMS or email delivery methods.

For example, in SIM swapping attacks, thieves convince a cell service provider to switch their victim’s number to a different SIM. Then there’s the MITM-style tactic where hackers eavesdrop on their victim’s texts via a weakness in the SS7 protocol, the one that connects mobile carriers.

OTPs sent by email are exposed to a broad attack surface. Email service providers, wireless networks, and internet protocols are all points of ingress for industrious hackers. Then think about the multiple devices you use to read emails. The same OTP could be sent to your cell phone, a work laptop, a home computer, and a smartwatch.

The codes themselves aren’t very secure either. Both SMS and email OTPs are plain text. Once a hacker has them, they can go right to resetting the user’s password.

Like passwords, these OTPs may be on their way out. In 2020, Microsoft published an article calling for a move away from text messages as an authentication method. NIST deprecated SMS OTPs in 2016. And the FBI warns against using them for MFA.

Authenticator tokens are a better OTP option

Authenticator tokens generate time-based OTPs (TOTPs) locally via an app or a device. They’re not delivered over a network, so SIM swapping, SS7, or internet eavesdropping attacks are useless. However, they are still vulnerable to phishing or the physical theft of the device itself.

Hard tokens are external devices, like a fob or dongle with a small screen. The token generates a new TOTP for each login and displays it to the user.

Soft tokens are apps, like Microsoft Authenticator, that exist only as software. Like hard tokens, authenticator apps produce unique TOTP codes for each authentication request.
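For the curious, the math behind a TOTP is short enough to sketch. The version below follows the published HOTP and TOTP algorithms (RFC 4226 and RFC 6238) using Python’s standard library. The 30-second step and 6-digit length are common defaults we’re assuming here, not settings taken from any particular app or fob.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226)."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation: low 4 bits pick the offset
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, for_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Time-based OTP (RFC 6238): HOTP where the counter is the time window."""
    if for_time is None:
        for_time = time.time()
    return hotp(secret, int(for_time) // step, digits)
```

The token and the server each hold the same secret from enrollment and compute the code independently, which is why nothing has to travel over SMS or email. It’s also why the code can still be phished: whoever types the current six digits in time gets through.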

Okta Verify also functions as an authenticator app built into Okta’s larger MFA function. Users first log in to their Okta account with a password or biometric, then confirm that they possess their device by entering the app-generated code.

In rare cases, hackers have breached authentication app providers. Authy, for example, was hacked via its parent company Twilio in 2022. The “sophisticated social engineering attack” allowed hackers to add new devices to 93 different Authy accounts.

More Secure: Biometrics

Pros: Secure method of primary authentication; user convenience; available on many devices

Cons: Can’t be reset if compromised; privacy concerns; low-tech versions can be spoofed

Best suited for: Employee and customer authentication, particularly for sensitive resources

Biometric authentication methods rely on something you are. That makes them hard to steal, difficult to misplace or share, and impossible to forget. Users are comfortable with them, and they increasingly come built-in on our devices. For all these reasons, biometrics are the heir apparent to passwords as the default authentication method.

But the immutable and personal nature of biometrics is also their Achilles’ heel. Once someone gets ahold of your biometric data, you can’t just reset it like a password. Gathering and storing personally identifiable information raises all sorts of privacy concerns, and the racial and gender-based shortcomings of these tools introduce a potential for bias. Also, some forms of biometrics are much more secure than others. For instance, most security experts are wary of voice recognition, which can be tricked by a free AI tool.

All this to say: biometrics can be a formidable part of your MFA system, but they’re not foolproof and they should be handled with care.

Fingerprint scans are secure when data is stored properly

The unique ridges on our fingertips provide a convenient way to verify user identity. That’s why so many devices let us tap to log in.

Still, it’s possible to spoof these scanners. One way to hack a fingerprint scan is to lift a physical print (à la CSI) and create a mold. It’s how a German computer club beat the iPhone’s first fingerprint sensor two days after it launched. That could put a single device at risk if stolen. But in practice, it’s difficult to recreate a fingerprint, especially with newer ultrasonic scanners.

Like passwords, fingerprints need to be stored securely. A breach in 2019 exposed over one million prints, showing why you shouldn’t create a trove of unencrypted biometric data. Most devices don’t. The iPhone, for example, stores fingerprint data locally. Also, most biometric data is, or should be, stored as numeric data, not images. So even if a hacker gets ahold of it, they’d need to reconstruct the mathematical representation to make it work.
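To illustrate what “numeric data, not images” means in practice, here’s a deliberately simplified sketch. It assumes a template is just a short list of numbers and that matching is a distance check against a threshold; real fingerprint matchers use far richer features and proprietary algorithms, so treat the vectors, the `threshold` value, and the function names as made-up placeholders.

```python
import math


def distance(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    if len(a) != len(b):
        raise ValueError("templates must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def matches(enrolled, sample, threshold=0.5):
    """Accept the sample if it is close enough to the enrolled template."""
    return distance(enrolled, sample) <= threshold


# Illustrative values only: the enrolled template, a fresh scan of the
# same finger (slightly different), and a scan of someone else's finger.
enrolled = [0.12, 0.80, 0.33, 0.57]
same_finger = [0.10, 0.82, 0.35, 0.55]
other_finger = [0.90, 0.10, 0.70, 0.20]
```

Two scans of the same finger never produce identical numbers, so the match is a “close enough” decision rather than an exact comparison. That tolerance is also why a stolen template is less directly reusable than a stolen password, though it’s no excuse for storing templates unencrypted.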

The arrangement of veins just below our skin’s surface is as unique as fingerprints. Near-infrared imaging sensors can map out these distinctive patterns, creating a new option for authentication called vascular biometrics. Unlike prints, we don’t leave our vascular map behind every time we tap a phone screen. And damaged skin doesn’t make vascular scans unusable. The real barrier to a wider rollout is the high cost of vascular scanners. If the technology becomes more affordable, it would be a great option for user authentication.

Facial recognition continues to improve

Facial recognition is a popular authentication option for MFA, although early face scanners weren’t hard to fool. But as with all forms of authentication (except maybe security questions), as attacks get more sophisticated, so does the technology to thwart them.

At first, smartphone facial recognition scanners relied on the 2D, front-facing cameras already available on the device. Hackers quickly proved that a photograph, even one as low-tech as a passport photo, could spoof that technology.

Apple’s Face ID uses three infrared technologies to make a topographical map of your mug. 3D facial recognition is much harder to fool than its 2D predecessor, but it isn’t impossible: Vietnamese researchers did it with a 3D-printed mask. And you could get a false positive from someone who looks a lot like you.

More recently, most facial recognition tech has added “liveness” tests, which make it harder to bypass them with a 2D photo. Like a visual Turing test, the software attempts to prove it’s encountering a physically present human being. A smile or blink may be all it takes to prove you’re not just a printed Facebook pic.

Let’s face it, the odds of your evil identical twin breaking into your device are slim, and most hackers won’t go through the trouble of printing a “you” mask. That’s why 3D facial scans are secure for most applications, especially if they’re backed up by another authentication factor.

Behavioral biometrics add ongoing security, but at a cost to privacy

Behavioral biometric software builds unique profiles of users based on measurable behavior patterns, like how you type. Your keystroke rhythm, mouse usage, typing speed, and length of time holding keys down form a recognizable pattern that’s unique to you and hard to replicate.

Behavioral biometrics are generally used as continuous authentication measures. That is, they assess your behavior after you’ve logged in and flag any deviations from your norm. It’s a way to verify that someone—or more likely, a non-human program—hasn’t hijacked your device. But there’s a troubling potential for this type of surveillance to cross the line into bossware or public surveillance.
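Here’s a toy version of that “flag deviations from your norm” idea, using key hold times as the only signal. It’s a sketch under heavy assumptions: the function names, the single feature, and the `max_z` cutoff are ours, and commercial products model many more behaviors with more robust statistics.

```python
from statistics import mean, stdev


def build_profile(hold_times_ms):
    """Summarize a user's baseline key hold times as (mean, standard deviation)."""
    return mean(hold_times_ms), stdev(hold_times_ms)


def is_anomalous(profile, session_hold_times_ms, max_z=3.0):
    """Flag a session whose average hold time is far from the baseline."""
    baseline_mean, baseline_std = profile
    session_mean = mean(session_hold_times_ms)
    if baseline_std == 0:
        return session_mean != baseline_mean
    z_score = abs(session_mean - baseline_mean) / baseline_std
    return z_score > max_z


# Illustrative numbers only, in milliseconds.
baseline = [95, 102, 98, 110, 105, 99, 101, 97]
profile = build_profile(baseline)
```

With this baseline, a session averaging around 100 ms per keypress looks normal, while a script “typing” with 40 ms holds gets flagged. Note what the sketch has to collect to work at all: a running record of how you type, which is exactly where the privacy questions start.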

Lawmakers and privacy advocates are scrutinizing biometrics. Some laws prevent companies from profiting off of collected biometric information. Several lawsuits have accused companies of abusing this data. As Jennifer Lynch, a senior lawyer for the Electronic Frontier Foundation, told The New Yorker, “It’s a very small leap from using this to detect fraud to using this to learn very private information about you.”

Most Secure: Hardware Keys

Pros: Immune to MITM, phishing, and keylogging attacks

Cons: Inconvenience of carrying an external device; a physical key that can be stolen

Best suited for: Workforce authentication, especially for highly sensitive data; remote and in-office employees

External hardware keys, like YubiKeys, are among the strongest authentication factors available. Also called FIDO keys, they generate a cryptographically secure MFA authentication code at the push of a button. FIDO keys differ from OTP hardware because they send codes directly to the device via a USB port or NFC connection. That gives hackers no chance to phish the code or steal it in a MITM or keylogging attack.
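One reason these keys resist phishing is that, under the WebAuthn standard, the signed response covers both the server’s challenge and the origin the browser actually visited. The sketch below shows only that binding. It is not the FIDO protocol: a real key signs with a per-site private key and the server verifies with the matching public key, whereas this toy uses a shared-secret HMAC purely so it runs with Python’s standard library. The function names and example URLs are hypothetical.

```python
import hashlib
import hmac
import os


def sign_assertion(key_secret: bytes, challenge: bytes, origin: str) -> bytes:
    """Toy 'key' response: a MAC over the challenge and the origin the browser saw.

    HMAC is a stand-in for the public-key signature a real FIDO key produces.
    """
    message = hashlib.sha256(challenge + b"|" + origin.encode("utf-8")).digest()
    return hmac.new(key_secret, message, hashlib.sha256).digest()


def server_verify(key_secret: bytes, challenge: bytes,
                  expected_origin: str, response: bytes) -> bool:
    """The server recomputes the response for its own origin and compares."""
    expected = sign_assertion(key_secret, challenge, expected_origin)
    return hmac.compare_digest(expected, response)


key_secret = os.urandom(32)   # established at registration (stand-in for a key pair)
challenge = os.urandom(16)    # fresh random value for each login attempt
real_site = "https://login.example.com"
fake_site = "https://login.examp1e.com"  # look-alike phishing domain
```

A response produced while the user is on the look-alike domain fails verification at the real site, even if the attacker relays the genuine challenge. And because the challenge is fresh for every login, a captured response can’t be replayed later.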

FIDO keys are very secure devices. They don’t hold any personal information, and cracking them is beyond the skill of most hackers. So they’re an excellent method to bundle with an identity provider like Okta and a device health app like Kolide. With all three in place, a hacker would need the user’s laptop or phone, a fingerprint, and the FIDO key to pass authentication.

The trade-off for hardware keys is the inconvenience of toting around another device. Some users may leave their key plugged in all the time, which renders it useless if a thief snatches both the device and the key. Losing your key can also be a pain, and replacing keys is expensive for companies at scale. That’s why most organizations reserve these keys for users who access particularly sensitive resources.

Most Secure: Device Authentication and Trust Factors

Pros: Proves that the device is known and secure

Cons: Must be used in conjunction with user authentication

Best suited for: Employee and contractor authentication

So far, we’ve talked about methods to verify a user’s identity. But it’s also important to verify that you recognize (and trust) the device they’re using. Otherwise, a well-meaning employee could unknowingly access your network with a malware-infected laptop. Or a threat actor could use a set of stolen credentials to impersonate an employee from the other side of the world.

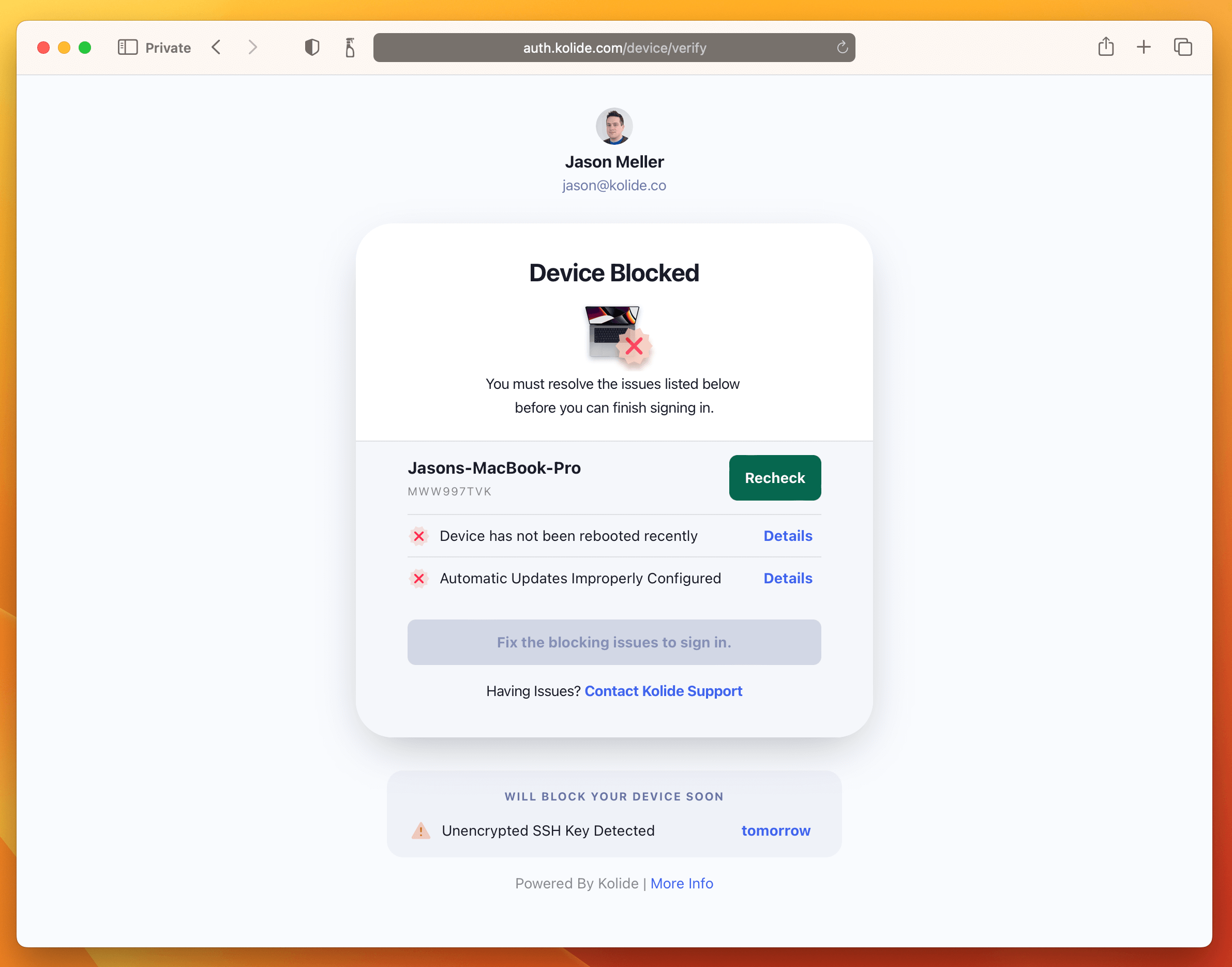

Device authentication factors ensure that only approved devices can log in. Some versions operate in a go/no-go state, meaning it’s enough to prove that the device is known. Others add an additional layer of protection: checking not only that a device is familiar, but that it’s in a secure state.

Certificate-based authentication

In certificate-based authentication (CBA), a device presents a digital certificate to a server for verification. Many identity providers, such as Okta and Azure, enable CBA as part of their MFA product.

CBA is considered very secure because it’s based on public/private key cryptography, where the private key acts as a combination that never leaves the device.

CBA offers some distinct advantages:

- It’s usable for all endpoint connections, including IoT devices without a direct user

- It allows mutual authentication of both the server and device

- It’s infinitely extensible because contractors, vendors, and partners can all be issued certificates
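As a rough illustration of the mutual authentication point, here’s how a server could be configured to demand a client certificate using Python’s built-in `ssl` module. The function name and the file path parameters are placeholders we invented; this is a sketch of the general mutual TLS pattern, not how Okta, Azure, or any specific identity provider implements CBA.

```python
import ssl
from typing import Optional


def build_mtls_server_context(
    server_cert: Optional[str] = None,  # path to the server's certificate (PEM)
    server_key: Optional[str] = None,   # path to the server's private key (PEM)
    client_ca: Optional[str] = None,    # path to the CA that issues device certs
) -> ssl.SSLContext:
    """Build a TLS server context that rejects clients without a valid certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # CERT_REQUIRED makes the handshake fail unless the client presents a
    # certificate that chains to a trusted CA.
    ctx.verify_mode = ssl.CERT_REQUIRED
    if server_cert:
        ctx.load_cert_chain(certfile=server_cert, keyfile=server_key)
    if client_ca:
        ctx.load_verify_locations(cafile=client_ca)
    return ctx
```

The server proves its identity with its own certificate, and the device proves its identity with one issued by the organization’s CA. The private key behind the device certificate is used to complete the handshake but is never transmitted.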

Still, CBA isn’t infallible. Hackers have breached certificate authorities, giving them free rein to create phony certificates. Thieves have also swiped existing certificates.

On-device agents that verify device health

Certificates tell your network that a device is known, but that’s only half the battle. What if that “trusted” laptop is missing a critical security update or is running a non-genuine version of Windows? Ensuring that a device is secure is a crucial part of Zero Trust Architecture (ZTA), and one that often gets neglected.

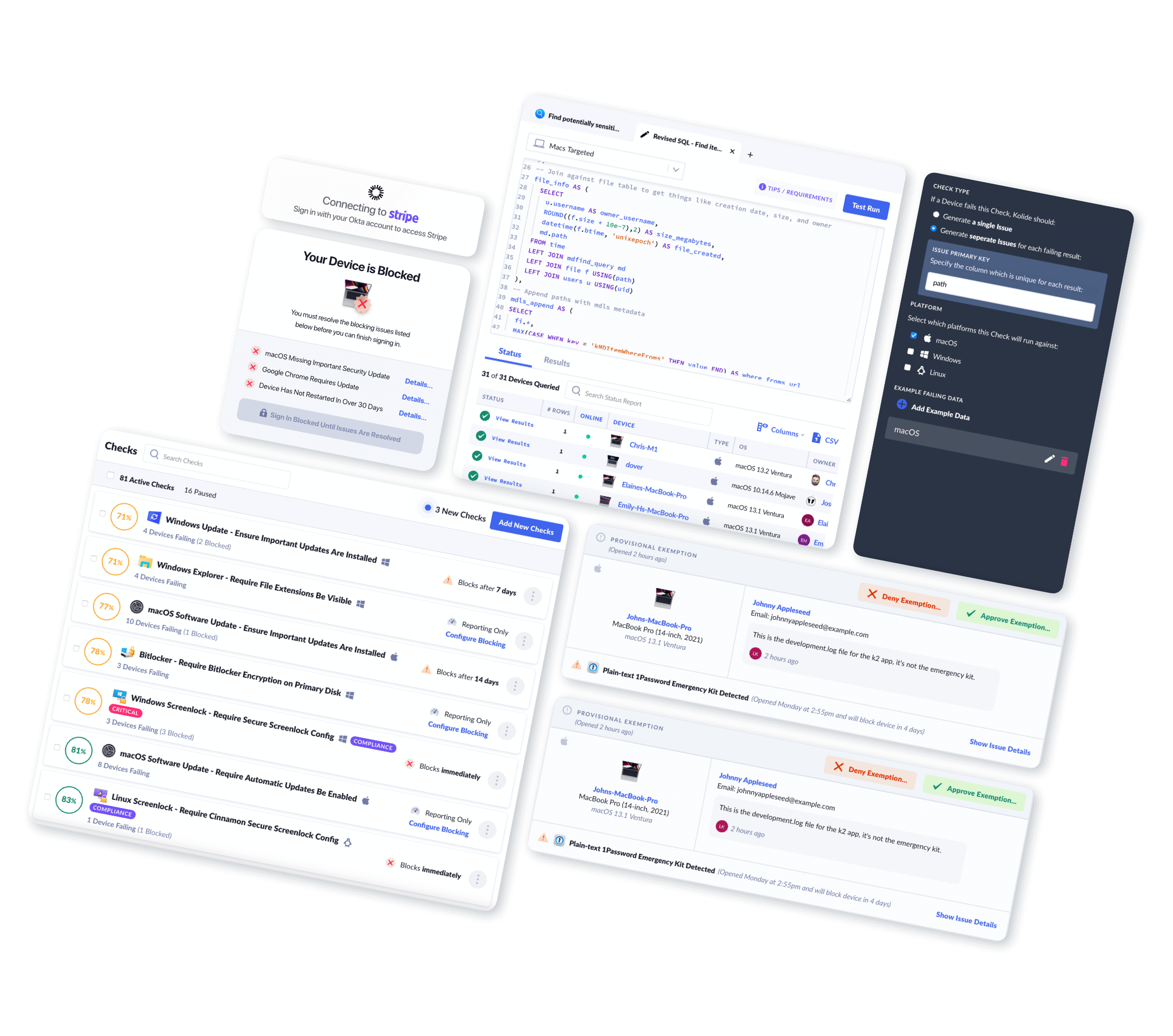

Software like Kolide makes device health part of the authentication process. Like a certificate in CBA, the presence of Kolide’s agent on a device works as a possession factor (if a device doesn’t have Kolide installed, it can’t log in).

But Kolide goes further, because it also scans for compliance issues before letting a user log in, so it can also be understood as a “posture factor.” Kolide’s device trust solution works in harmony with Okta’s process to ensure that both user and device are secure. (For more on how this works, read our blog!)
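To show the difference between “known” and “known and healthy,” here’s a generic sketch of a posture check. It does not represent Kolide’s actual agent, API, or list of checks. The `DeviceReport` fields, the `KNOWN_DEVICES` set, and the device IDs are all hypothetical, loosely based on the examples above (a missing security update, a non-genuine OS).

```python
from dataclasses import dataclass
from typing import List

# Hypothetical inventory of enrolled devices.
KNOWN_DEVICES = {"laptop-001", "laptop-002"}


@dataclass
class DeviceReport:
    device_id: str
    agent_installed: bool   # is the health agent present on the device?
    os_up_to_date: bool     # are critical security updates applied?
    os_genuine: bool        # is the OS a genuine, licensed copy?


def failed_checks(report: DeviceReport) -> List[str]:
    """Return a human-readable list of every check the device fails."""
    failures = []
    if report.device_id not in KNOWN_DEVICES:
        failures.append("device is not recognized")
    if not report.agent_installed:
        failures.append("health agent is not installed")
    if not report.os_up_to_date:
        failures.append("critical security update is missing")
    if not report.os_genuine:
        failures.append("operating system is not genuine")
    return failures


def may_authenticate(report: DeviceReport) -> bool:
    """Go/no-go on identity alone is not enough; every posture check must pass."""
    return not failed_checks(report)
```

Returning the list of failures, rather than a bare yes or no, lets you tell the user exactly what to fix before they try again.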

When Designing MFA, Don’t Forget the Human Factor

Here’s the note we’ll leave on: a good approach to MFA doesn’t just consider the hackers it’s designed to keep out. It accounts for people who need to be let in. Humans make mistakes. They have work to get done. And by and large they want to do the right thing. What often goes wrong in MFA (and security more broadly) is that it treats users as enemies rather than allies.

Keep these three points in mind to help users become the hidden factor in your MFA.

Make authentication simple. Low-lift MFA leads to better security habits. Tell a user to create, remember, and frequently update credentials, and they’ll find shortcuts that put your company at risk.

Protect privacy. Even with the best intentions, security initiatives can erode user privacy. To earn and keep employees’ trust, collect the minimum amount of information, be transparent about how it’s used, and safeguard it against outside threats. That’s all part of our belief in Honest Security.

Create a security culture. When properly equipped with tools and knowledge, users will behave more securely. So it’s worth investing the time to educate them about security, instead of implementing changes without their knowledge or consent.

So stop fantasizing about a user-free authentication solution, and start building one that puts them front and center.

Want to see how Kolide works as an authentication factor? Watch an on-demand demo to see our agent in action.

-

[1] 1Password has joined the FIDO Alliance and announced a plan to support passwordless authentication. Some SSO providers also allow passwordless login for their users.